TL;DR – This post offers a practical checklist to help you diagnose why your website has lost visitors. It guides you through common issues like crawl problems, broken links, lost backlinks, security hacks, hosting and content errors so you can systematically identify and fix what’s driving traffic away.

Losing Visitors? – Your SEO Problem Solving Checklist – Pro Tips!

- Check your robots.txt to ensure search engines can crawl important pages.

- Look for crawl errors in Search Console and fix them quickly.

- Fix broken URLs using proper redirects.

- Review lost backlinks and recover valuable links where possible.

- Scan your site for hacks or malware.

- Review any recent site migrations or hosting changes.

- Identify and resolve duplicate content issues.

- Improve hosting performance if your site is slow or unstable.

- Avoid over-optimised or unnatural backlink profiles.

- Improve page titles and meta descriptions to increase click-through rates.

Many people are trained in the art of Search Engine Optimisation, but far fewer are comfortable troubleshooting a site when something goes wrong. We have all been there. You spend a long time marketing a website, building up the content and visitors slowly over time, and then, in one fell swoop, the bulk of your traffic disappears.

Time to hit the panic button? No, not just yet. Some answers are straightforward, and it is easy to overlook the obvious solution.

First, if you know you have stayed within Google's guidelines, create a checklist of possible causes and cross them off one at a time.

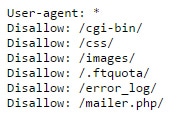

Check your robots.txt

Your website's robots.txt file may be blocking search engine robots from accessing vital files such as your CSS style sheets. If so, Google cannot process your website correctly. In particular, if Google cannot access your CSS files, it cannot determine whether your website is mobile-friendly, and you will lose a little ground in mobile search queries.

The robots.txt file, which implements the robots exclusion protocol, is a plain text file in the root of your web server that tells crawlers which directories and files they may crawl and which to avoid. It can be used for a variety of reasons:

- To keep crawlers away from duplicate versions of your content.

- To discourage crawling of areas such as administrative and login pages. Note that robots.txt is publicly readable and is not a security measure, so never rely on it to protect credentials.

- To tell crawlers where to find your XML sitemap.

Compliance is voluntary: search engines will ignore any rules in robots.txt that they don't understand or don't support. Googlebot, for instance, ignores the Crawl-delay directive.

The syntax of this file is simple: rules are grouped under a User-agent line that names the crawler they apply to (an asterisk matches all crawlers), followed by one or more Disallow or Allow lines, each giving a URL path. These lines tell the crawler whether it may fetch a URL that matches that path.
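As a rough illustration of how such rules are evaluated, the sketch below uses Python's standard `urllib.robotparser` module to test a few URLs against a robots.txt file. The robots.txt content, the `example.com` URLs and the `blocked_urls` helper are all made up for this example. The standard-library parser also does simple prefix matching only, so it will not reproduce every nuance of how Googlebot interprets wildcards.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /assets/css/
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt forbids for this user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical URLs on a placeholder domain.
urls_to_check = [
    "https://www.example.com/",
    "https://www.example.com/assets/css/style.css",
    "https://www.example.com/wp-admin/options.php",
]

blocked = blocked_urls(ROBOTS_TXT, urls_to_check)
for url in blocked:
    print("Blocked for Googlebot:", url)
```

Here the style sheet shows up as blocked, which is exactly the kind of rule that stops Google from rendering a page properly.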

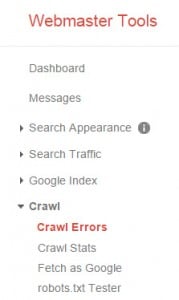

Check for any crawl errors

In Google Search Console (formerly Google Webmaster Tools), you can check for crawl errors. They often appear after an update to your website or to a WordPress plugin you have installed. We often play around with site speed, and some plugins we have used have interfered with the website's JavaScript. As a result, our Google Analytics script was not tracking correctly.

The crawl error report will tell you of any 404 errors on your website. We recently had a client who had lost 30% of the visitors to her eCommerce store, and we found through Search Console that she had accidentally changed the permalink of one of her highly trafficked landing pages.

The simple solution was to restore the original permalink and 301-redirect the accidental new URL to it.

Crawl errors occur when Googlebot cannot access a page on your site. If your site is inaccessible to Google, it will not be able to find or index any of your pages.

The following are common crawl errors:

- 404 (Page Not Found)

- 403 (Forbidden)

- 500 (Internal Server Error)

- 503 (Service Unavailable)

Googlebot is the crawler used by Google Search and other Google products. A crawl error is recorded either when a page is inaccessible to Googlebot or when the server returns an error as Googlebot tries to access it.

If your site shows crawl errors in your Search Console account, you should fix them as soon as possible, as they can lead to indexing issues and lost traffic.
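If you want to spot-check a handful of important URLs yourself rather than wait for a report, a small script can help. The following is a minimal sketch using only Python's standard library. The function names, the user-agent string and the suggested actions are our own illustrative choices, not part of any Google tool. Note that `urlopen` follows redirects, so a correctly redirected URL reports the status of its destination, and some servers reject HEAD requests.

```python
import urllib.error
import urllib.request

# Suggested first actions for the error codes listed above (illustrative wording).
ADVICE = {
    404: "page not found: restore the page or 301-redirect the URL",
    403: "forbidden: check file permissions and firewall or security plugin rules",
    500: "internal server error: check the server error log",
    503: "service unavailable: check for overload or maintenance mode",
}


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Return the final HTTP status code for a URL (redirects are followed).

    Network failures such as DNS errors raise urllib.error.URLError and are
    deliberately left for the caller to handle.
    """
    request = urllib.request.Request(
        url, method="HEAD", headers={"User-Agent": "crawl-check-sketch/0.1"}
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 4xx and 5xx responses arrive as exceptions


def classify_status(status: int) -> str:
    """Map a status code to a short note on what to look at first."""
    if 200 <= status < 300:
        return "ok"
    return ADVICE.get(status, "other: investigate manually")


# Offline demonstration with hard-coded codes; for a live check you would call
# classify_status(fetch_status("https://www.example.com/some-page")).
for code in (200, 404, 503):
    print(code, "->", classify_status(code))
```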

Check your backlinks

You may have lost a highly relevant backlink pointing to your website. Backlink-checking tools such as Ahrefs.com will show you any lost backlinks. Losing even one strong backlink can cause a heavy loss of visitors, so do not underestimate their power. Check for lost backlinks regularly so that you can try to recover them or find a replacement.

Ahrefs is a powerful tool for SEOs and digital marketers. We will show you how to use it to find your own backlinks or your competitors' and how to analyse them.

- Enter the URL you want to analyse in the search bar at the top of the page.

- You can also enter a competitor’s URL and click “Get Backlinks” to see their backlink profile.

- Click on “SEO” in the sidebar (on the left side of the page), then select “Backlinks.”

- This will bring up a list of all backlinks pointing to your website or a competitor’s website from different sites/pages. The number next to each URL indicates how many times an external site has linked back to your site (or your competitor’s site).
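If your backlink tool lets you export the list as a CSV file, comparing an older export with a newer one is a quick way to see which links have disappeared. The sketch below assumes a column named `source_url`, which is a hypothetical name: check the header row of your own export and adjust it. The sample data is invented.

```python
import csv
import io


def referring_pages(export_csv: str, column: str = "source_url") -> set[str]:
    """Collect the linking page URLs from a CSV export held in a string."""
    reader = csv.DictReader(io.StringIO(export_csv))
    return {row[column].strip() for row in reader if row.get(column)}


def lost_backlinks(previous_csv: str, current_csv: str) -> list[str]:
    """Return linking pages present in the older export but not the newer one."""
    return sorted(referring_pages(previous_csv) - referring_pages(current_csv))


# Invented sample exports; real files would be read with open(...).read().
LAST_MONTH = """source_url,anchor
https://blog.example.org/review,great shop
https://news.example.net/roundup,example store
"""

THIS_MONTH = """source_url,anchor
https://news.example.net/roundup,example store
"""

lost = lost_backlinks(LAST_MONTH, THIS_MONTH)
print(lost)
```

Each URL in the result is a page worth visiting to find out whether the link was removed, the page was deleted, or the site has gone offline.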

Has someone hacked you?

We have seen many websites hacked. What usually happens is that hackers upload files into your website hosting account, creating pages that point to affiliate products. They then build a lot of spammy backlinks in bulk.

Google is usually quite lenient with hacked websites, but a hack can sometimes cause ranking problems, especially if the attackers also point spammy backlinks at your homepage. However, that is not as common.

The biggest problem with WordPress is that it’s so popular. The more users there are, the more hackers will be attracted to it. Hackers know one way to get into your site is by exploiting a known vulnerability in WordPress (or any other software). So they look for vulnerabilities and try to exploit them. Here are some of the most common security issues:

- Malware/spam – Occasionally, you may see an increase in spam or malware on your site. This is usually caused by someone finding a vulnerability in your installation that lets them upload files without permission. They then fill those files with spam or malware and hope that visitors to the page will download the code onto their computers. If this happens, you should remove the files immediately.

- Phishing – Phishing is a type of attack where criminals try to trick people into giving up sensitive information like passwords, credit card numbers, or Social Security numbers through email or other means, such as fake websites designed to look legitimate.
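Because hackers typically upload new files into your hosting account, one simple first check is to list files that have changed recently and that you do not recognise. The following is a minimal sketch of that idea. The function name, the seven-day window and the list of file extensions are our own assumptions, and the demonstration runs against a throwaway temporary folder rather than a real site. Modification times can be forged, so treat this as a quick heuristic alongside a proper malware scanner, not a replacement for one.

```python
import os
import tempfile
import time
from pathlib import Path


def recently_modified(root: str, days: float = 7,
                      suffixes: tuple[str, ...] = (".php", ".js", ".html")) -> list[str]:
    """List files under root with a matching extension changed in the last `days` days."""
    cutoff = time.time() - days * 86400
    hits = []
    for path in Path(root).rglob("*"):
        if (path.is_file()
                and path.suffix.lower() in suffixes
                and path.stat().st_mtime >= cutoff):
            hits.append(str(path))
    return sorted(hits)


# Demonstration in a temporary folder standing in for a site's document root.
with tempfile.TemporaryDirectory() as site_root:
    old_file = Path(site_root, "index.php")
    new_file = Path(site_root, "uploads", "x7.php")
    new_file.parent.mkdir()
    old_file.write_text("<?php // legitimate page")
    new_file.write_text("<?php // unexpected file")

    # Backdate the legitimate file by 30 days so only the new upload is flagged.
    month_ago = time.time() - 30 * 86400
    os.utime(old_file, (month_ago, month_ago))

    suspects = [Path(p).name for p in recently_modified(site_root, days=7)]

print(suspects)
```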

Have you migrated recently to a new server?

So, you have a .com domain and have migrated to GoDaddy from a UK host? How does Google know which country's visitors to send you? Unless you set your international targeting to the UK, it is possible Google will not send you UK visitors.

You are now on a US IP address, and a .com is a global domain. If your customers are in the UK, it would be much wiser to use a .co.uk domain and a UK-based hosting company.

Server migrations are no fun. They’re stressful, time-consuming, and often result in data loss or downtime. But they can be made less painful if you’re prepared. The key is to plan ahead of time and have a plan B to fall back on if something goes wrong during the migration process.

Here are some tips for successfully migrating your servers:

Make sure you have access to the old server's files and databases. You don't want to discover halfway through your migration that you can't access the old server's data. If you can't get in, contact your hosting provider immediately and ask for help getting into those files and databases.

Test the new server before going live. It's important to ensure everything works correctly on the new server before switching over permanently from the old one. If anything goes wrong during this testing phase, it will be much easier (and cheaper) to fix now than later, once your customers are relying on it around the clock.

Checking for duplicate content

As discussed in a previous post on duplicate content, you need to check that your content has not been copied. Unfortunately, content theft exists, and many scraper sites linger in the dark corners of search engines, so check Copyscape regularly to see if this has happened to you.

Duplicate content is a problem for search engines. It's not just a problem for users who have to sift through repetitive results but also for the search engines themselves. Duplicate content can lead to the same material ranking more than once and lower the overall quality of search results.

For example, suppose your website has two pages containing the same article, "How to Lose Weight Fast," under slightly different titles. The two articles are identical except for the title, so Google treats them as duplicate content.

Duplicate content can occur in many different ways, including:

- Copying text from another source and pasting it into your page

- Using the same images on multiple pages (often with slight variations)

- Using scripts or plugins that generate blocks of content copied from other websites
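To get a rough feel for how similar two pieces of text are, you can compare their overlapping word sequences (often called shingles) and compute a Jaccard similarity score between 0 and 1. This is a simple sketch of one common technique, not a description of how Google or Copyscape detect duplicates. The function names, the three-word shingle size and the sample sentences are our own choices.

```python
import re


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Break text into the set of overlapping word sequences of the given size."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(text_a: str, text_b: str, size: int = 3) -> float:
    """Jaccard similarity of the two shingle sets: 0.0 is unrelated, 1.0 is identical."""
    set_a, set_b = shingles(text_a, size), shingles(text_b, size)
    if not set_a or not set_b:
        return 0.0
    return len(set_a & set_b) / len(set_a | set_b)


# Invented sample texts.
page_a = "Cut sugary drinks, walk every day and sleep eight hours a night."
page_b = "Cut sugary drinks, walk every day and sleep eight hours each night."
page_c = "Our store ships handmade ceramic mugs across the UK."

print("near-duplicate:", round(similarity(page_a, page_b), 2))
print("unrelated:", round(similarity(page_a, page_c), 2))
```

A score close to 1 between two of your own pages, or between your page and someone else's, is a prompt to look more closely.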

Are you on shared hosting?

Many people do not realise this, but on shared hosting you probably share your server with hundreds of other websites. If one of them consumes too many resources, it slows down the entire server, affecting all websites.

So, for example, if someone is trying to load your website over a slow 3G network and your server is also slow, they will give up and move on to another website. Another common problem with a shared server is a high volume of spam email sent from the shared mail server.

Shared hosting is a type of web hosting that uses a single server to host multiple websites. The term "shared" refers to the fact that many sites use the same server. Shared hosting is affordable but usually the slowest option, because all sites share the same resources (storage space, bandwidth, etc.).

You’re probably on shared hosting. That’s the norm for most people. Shared hosting is the most affordable way to get a website online, and it works well for small sites and blogs.

If your site gets lots of traffic or requires more resources, shared hosting might not be ideal. It can also be frustrating if you need more control over your server than a shared plan allows.

If you’re looking to upgrade from shared hosting, here are some options:

VPS (Virtual Private Server)

A VPS gives you more control over your server than shared hosting, and typically costs less than dedicated servers or managed WordPress hosting plans. A VPS can be a fallback if your shared host keeps going down or you need more resources than it offers.

A Virtual Private Server (VPS) is a virtual machine hosted on a physical server. VPS hosting lets you run your applications and site builders in your own isolated environment, with an allocation of resources reserved for you rather than shared with other users.

Dedicated servers

Big businesses use dedicated servers because they have all the power they need. They cost more than shared hosting and VPS plans because their hardware and resources are available exclusively to that account holder, but if your site needs it, this is the way to go.

We offer a range of dedicated servers, from small entry-level packages to larger enterprise-class systems.

A dedicated server is a server that is not shared with other users and has its own physical hardware. It is designed to host websites and other services requiring full root access on Linux or Windows operating systems.

A dedicated server provides more resources than a shared hosting plan, such as CPU power, RAM, storage space, and bandwidth. It also comes with a higher cost than shared hosting plans.

Are you over-optimising your backlinks?

Many people are stuck in an earlier era of search engine marketing and are still building low-quality backlinks with keywords as anchor text simply because they do not know any other way.

If you hire somebody cheap to save a bit of money, a cheap service is precisely what you will receive, and it will end up costing you more in the long run.

Over-optimisation is the Achilles heel of many SEO services: if you over-optimise for a keyword, you will see your rankings move the wrong way. And the one thing that is harder than building backlinks? Getting them removed.

Some SEOs are obsessed with having the perfect backlink profile. They spend hours building links and testing as much as possible to achieve the highest possible rankings. However, this can be counterproductive if you over-optimise your backlink profile and lose rankings.

A good example is blog networks, which usually work well for short-term gains but don't always last in the long term. Over the last few years, many changes at Google have caused blogs that were previously ranking in the top 10 for competitive keywords to drop out of sight completely.

This can happen because Google has become very good at identifying unnatural patterns and removing sites that show those patterns from its index.

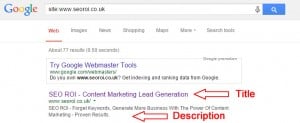

The title and meta descriptions are too long.

For quite a while now, Google has been rewriting titles and meta descriptions, and only a limited number of characters is displayed in search results. Therefore, if a title or description is too long, Google will truncate or rewrite it, which can affect the click-through rate of your listing.

Remember, your description becomes the snippet in your listing when someone types a search query related to your website; therefore, it is imperative that you make it stand out with a strong call to action.

Meta descriptions are the short descriptions for your content that appear in search results. Each page on your site should have a unique meta description. The purpose of meta descriptions is to help users understand what they can expect to find when they click through to your page.

It’s important to ensure that the text in your meta description is relevant and accurate so that your users aren’t disappointed when they visit your page.

The title of a web page or post should be descriptive enough that someone could understand what they're going to read without clicking on it, yet not so long as to be unreadable. A great title will be both descriptive and concise, but it can also be fun or catchy!
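A quick way to audit a page is to pull out its title and meta description and measure them. The sketch below uses Python's standard `html.parser` module. The limits of 60 and 160 characters are common rules of thumb that we have assumed for the example, not figures published by Google, which decides what to show based on display width rather than a fixed character count. The class name, function name and sample HTML are invented for illustration.

```python
from html.parser import HTMLParser

# Assumed rule-of-thumb limits; adjust to taste.
TITLE_LIMIT = 60
DESCRIPTION_LIMIT = 160


class HeadTextParser(HTMLParser):
    """Collect the <title> text and the meta description from an HTML page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True
        elif tag == "meta":
            attributes = dict(attrs)
            if (attributes.get("name") or "").lower() == "description":
                self.description = attributes.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def length_warnings(html: str) -> list[str]:
    """Return a warning for each of title and description that exceeds its limit."""
    parser = HeadTextParser()
    parser.feed(html)
    warnings = []
    title = parser.title.strip()
    description = parser.description.strip()
    if len(title) > TITLE_LIMIT:
        warnings.append(f"title is {len(title)} characters (limit {TITLE_LIMIT})")
    if len(description) > DESCRIPTION_LIMIT:
        warnings.append(
            f"description is {len(description)} characters (limit {DESCRIPTION_LIMIT})"
        )
    return warnings


# Invented sample page with an overlong title and a short description.
SAMPLE_HTML = """<html><head>
<title>Handmade Ceramic Mugs, Plates, Bowls, Vases and Gifts for Every Occasion | Example Store UK</title>
<meta name="description" content="Shop handmade ceramic mugs.">
</head><body></body></html>"""

page_warnings = length_warnings(SAMPLE_HTML)
for warning in page_warnings:
    print(warning)
```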

Written by Terry Burrows, an experienced freelance SEO consultant. I have been helping businesses improve search visibility through practical, data-driven optimisation since 2002. My work focuses on measurable results, ethical SEO practices, and strategies that support sustainable long-term growth.